library(here)

library(amt)

library(sf)

library(lubridate)

library(ggplot2)

library(dplyr)

library(suncalc)

library(amt)

library(ctmm)

# devtools::install_github("pratikunterwegs/atlastools")

library(atlastools)Power analysis

Figure: Workflow for evaluating population sample sizes while balancing trade-offs with sampling parameters (Porteus et al. - 2023).

Defining research targets

We aim to assess the potential impact of invasive predators (European red foxes, Ferral cat) on Australian shorebirds, whether they are overlapping their home ranges in time and space.

Several questions are defined:

(i) assess space-use of European red foxes and Feral cats depending tidal and circadian cycles in the local estuaries

(ii) assess space-use of shorebirds depending tidal and circadian cycles in the local estuaries

(iii) compare and test whether shorebird and invasive predators space-use overlap in space and time

Selecting existing data

Now we established our research targets, the next step is to select the appropriate datasets to extract individual- and population-level parameters. So we can simulate a synthetic case that we will use to assess our sample features requirement to design at the best the method we will implement on the ground in the real world.

We selected a data set available and open access, acquired on European red foxes (Vulpes vulpes) into the United Kingdom within wetlands and near peri-urban areas. This is very close to our study case since our foxes would be evolving in habitats near located around the estuaries, across wetlands and also bordered by urban areas.

Load the data in your R environment accessible on movebank:

Porteus TA, Short MJ, Hoodless AN, Reynolds JC. 2024. Data from: Study “Red Fox (Vulpes vulpes) in UK wet grasslands”. Movebank Data Repository. https://doi.org/10.5441/001/1.304

From the study Porteus et al. 2024.

Packages

Formatting data

Let’s open, format and have a quick look on the data before all.

data_csv <- read.csv(here::here("qmd", "chapter_5", "data", "fox_uk_test.csv"))

data_all <- data_csv %>%

mutate(timestamp = ymd_hms(timestamp)) %>%

make_track("utm.easting",

"utm.northing",

timestamp,

individual.local.identifier, # keep indiv.ID

gps.hdop, # keep location error metric (horizontal dilution-of-precision)

crs = 32630) # EPSG for UTM zone 30N

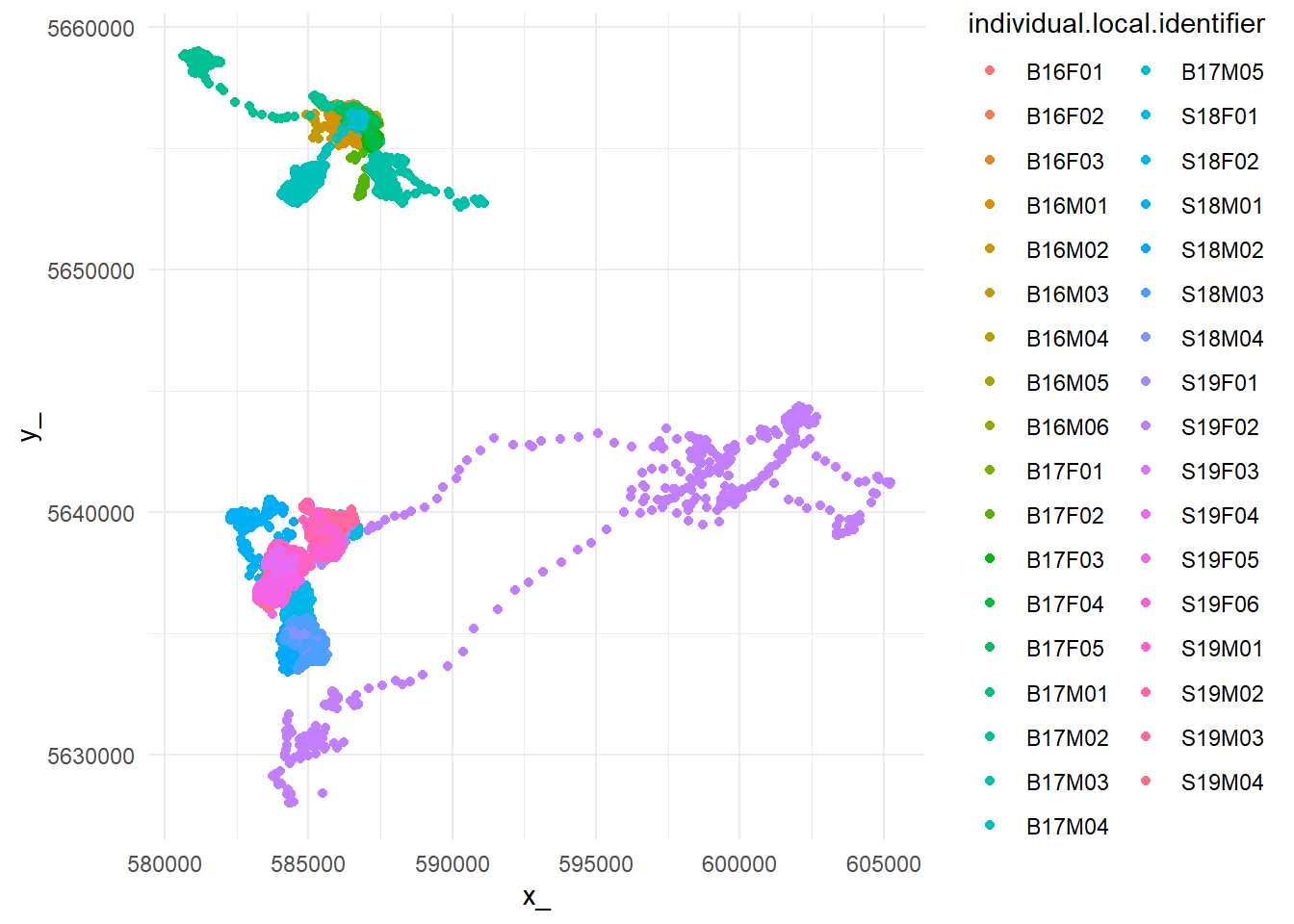

# Quick plot

ggplot(data_all, aes(x_,

y_,

color = individual.local.identifier)) +

geom_point() +

theme_minimal()

Filtering outliers

(Gupte et al. - 2021 & documentation; Jonsen et al. - 2023).

We also filter individuals to keep only 5 so the analysis doesn’t take too long to run.

# Extract movement parameters

data_all <- data_all %>%

mutate(

speed_in = atl_get_speed(.,

x = "x_",

y = "y_",

time = "t_",

type = "in"),

speed_out = atl_get_speed(.,

x = "x_",

y = "y_",

time = "t_",

type = "out"),

angle = atl_turning_angle(.,

x = "x_",

y = "y_",

time = "t_") )

# Filter unrealistic movement

# Adjust S (= 10) based on Porteus & al. (2023) findings

data <- data_all %>%

dplyr::filter(speed_in < 10 | speed_out < 10)

# Median smoothing

# I choose to not doing it because I want keep real observed values and not compute somoothed ones

# Thinning movement tracks

# No need here but might be in real analysis

# Keep only 5 individuals to save time in procedures

data <- data %>%

filter(individual.local.identifier %in%

unique(data_all$individual.local.identifier)[1:5])Verifying residency assumption

Fleming et al. - 2014 Calabrese et al. - 2016

We must be sure every single tracked individuals are residential and no vagrant, so we can assess a home range.

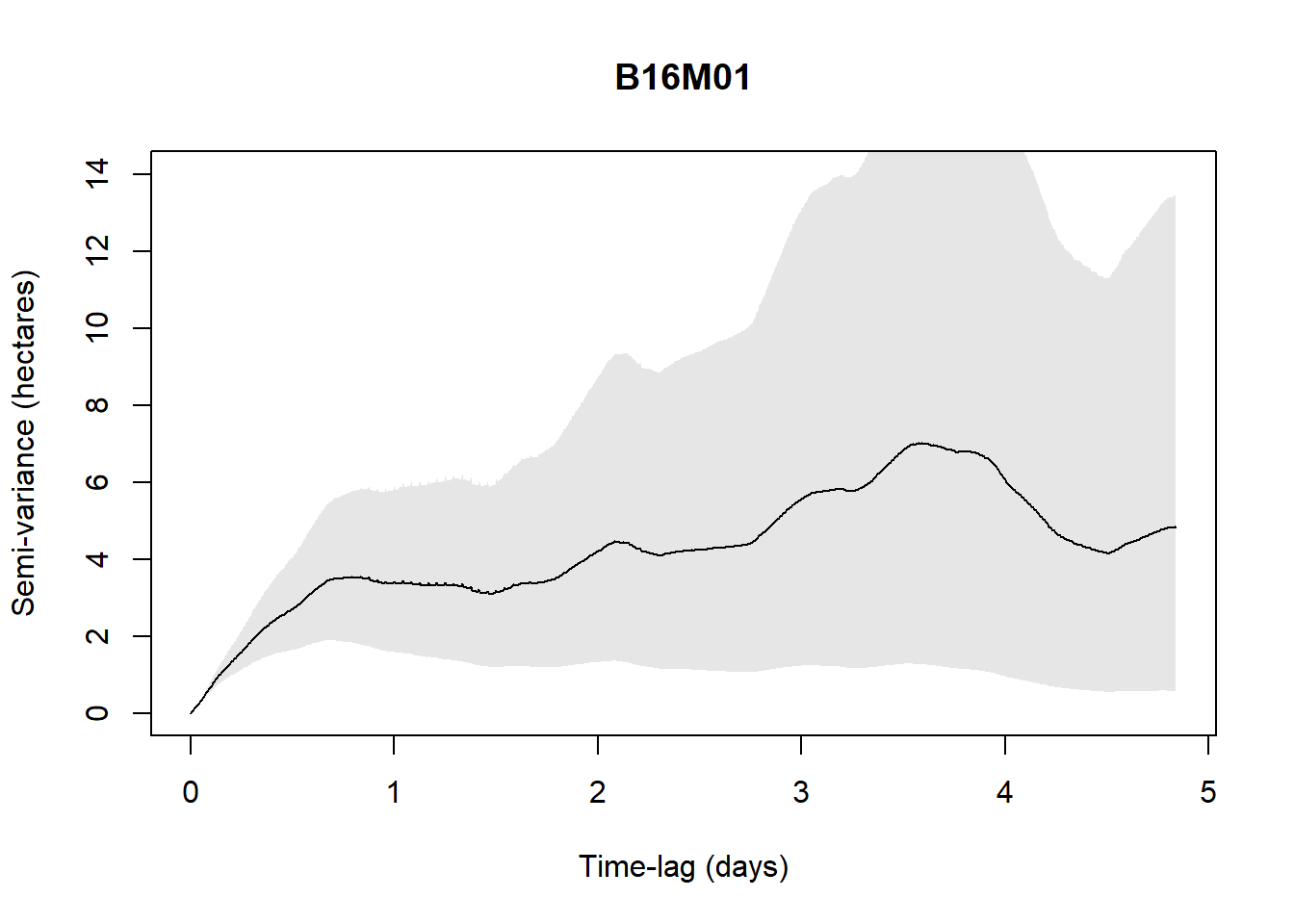

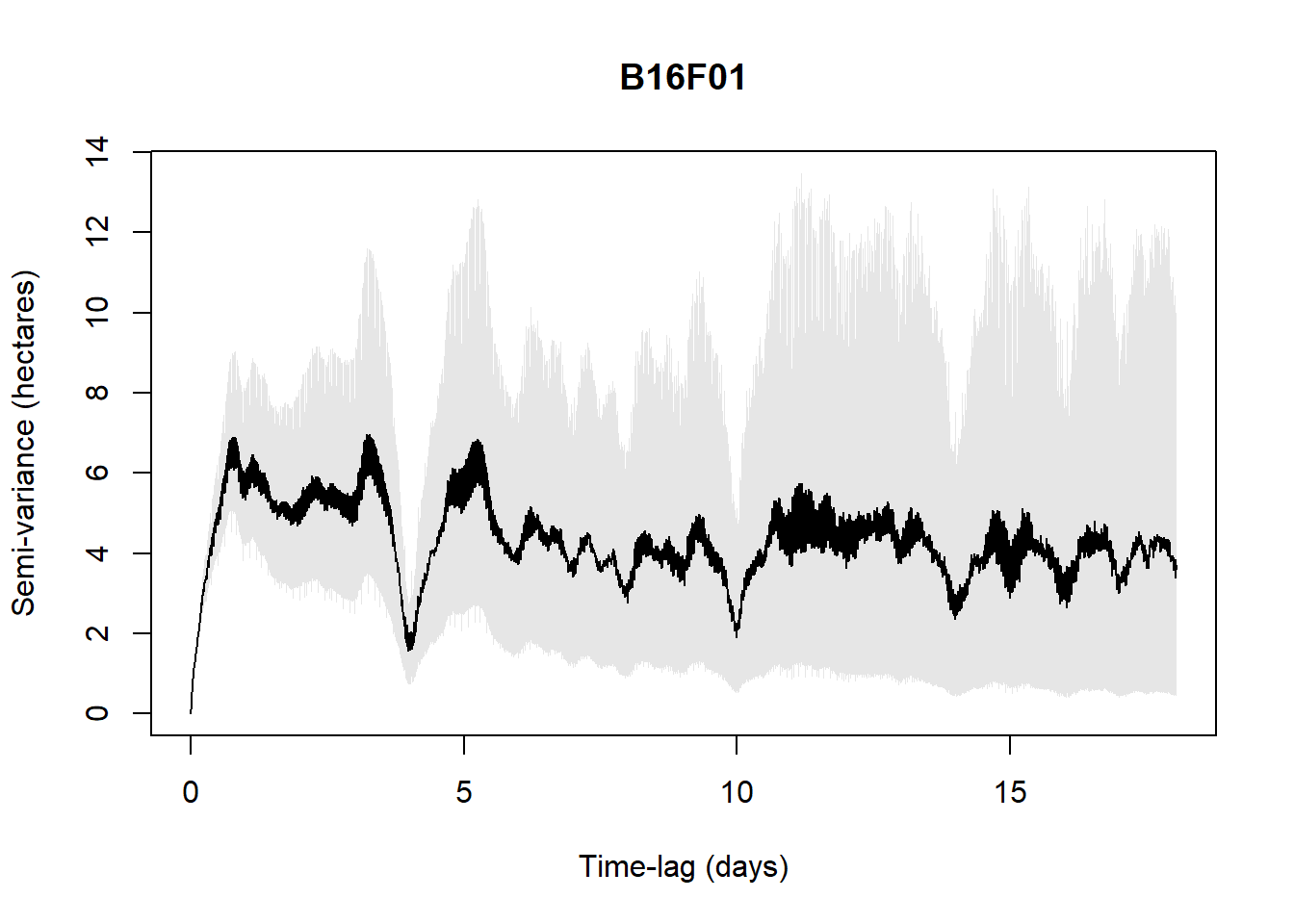

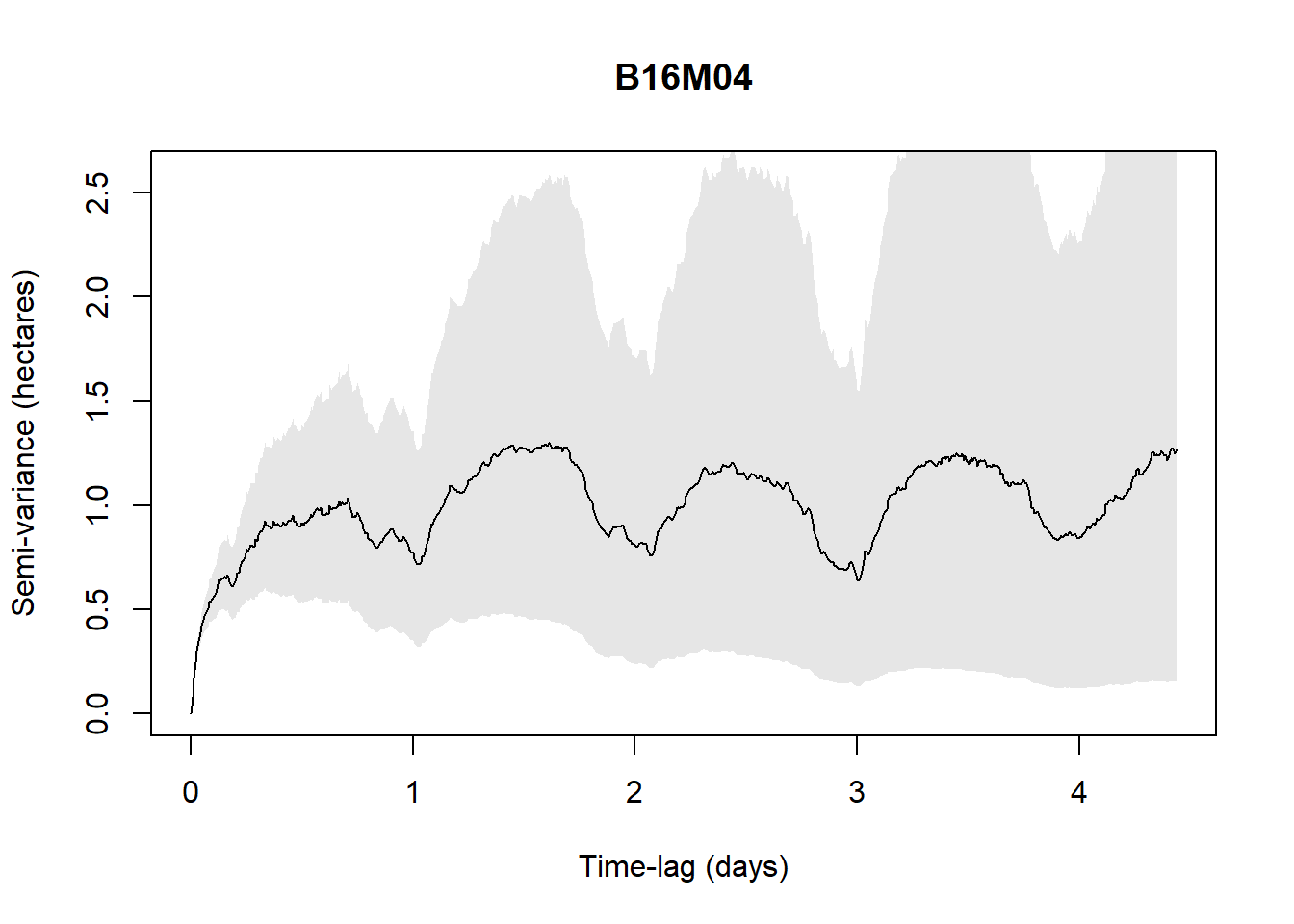

An easy way to do this is to plot a variogram, essentially a means of visualizing time-lag-dependent behaviors in the data and thus informative to look at.

For example, with the available data (5 individuals only are checked to save time in the process), when the below figure shows a plateau, it indicates range residency, otherwise the individual is non-stationary (i.e., B16M02).

# Format for ctmm

data_ctmm <- data %>%

rename(

x = x_,

y = y_,

timestamp = t_,

individual.local.identifier = individual.local.identifier) %>%

as.data.frame()

data_ctmm <- as.telemetry(data_ctmm,

projection = "+proj=utm +zone=30 +datum=WGS84")

# Split by individual

individuals <- unique(data$individual.local.identifier)

# Loop over individuals (AI generated)

results <- list()

for (id in individuals) {

cat("Processing:", id, "\n")

# Subset telemetry for this individual

data_ctmm_indiv <- data_ctmm[[id]]

# Plot variogram to visually inspect range residency

vg <- variogram(data_ctmm_indiv)

plot(vg, main = id)

# Fit candidate models

GUESS <- ctmm.guess(data_ctmm_indiv, interactive = FALSE)

fits <- ctmm.select(data_ctmm_indiv, GUESS, verbose = TRUE)

results[[id]] <- fits

}Processing: B16M01

Processing: B16M02

Processing: B16M03

Processing: B16F01

Processing: B16M04

Beyond visually validating the residency assumption, we can infer the data with movement model selections to verify the assumption:

IID (Independent and Identically Distributed) — The simplest model, assuming all locations are independent with no temporal autocorrelation. Essentially treats the animal as if it teleports randomly within its range. If this is the best model, your sampling rate is too coarse to detect any movement autocorrelation.

BM (Brownian Motion) — The animal diffuses randomly with no tendency to return to any area. Non-stationary, meaning the animal has no home range — it just keeps wandering indefinitely. A red flag for home range estimation.

OU (Ornstein-Uhlenbeck) — Adds a central tendency (home range attraction) to Brownian motion. The animal wanders but is pulled back toward a center, producing a stable home range. Positions are auto-correlated but velocities are not — meaning the model assumes instantaneous direction changes, which is biologically unrealistic at fine sampling scales.

OUF (Ornstein-Uhlenbeck with Foraging/velocity) — Extends OU by also auto-correlating velocities, meaning the animal moves in a more continuous, realistic trajectory with persistent direction before eventually returning to its range center. The most biologically realistic model for GPS data collected at fine temporal resolution. τF captures the timescale of this velocity autocorrelation (roughly the foraging or transit timescale), while τH captures the home range timescale.

OUf (Ornstein-Uhlenbeck with fine-scale autocorrelation) — Similar to OUF but with a different parameterisation of the velocity process, capturing very fine-scale movement persistence. When this model wins, it often suggests the sampling interval is very short relative to the animal’s movement timescales.

Anisotropic variants — Any of the above models can be anisotropic, meaning the home range or movement is not assumed to be circular but can be elliptical, capturing directional biases in space use such as a range stretched along a valley or coastline.

For example, in the below results, with the available data (5 individuals only are checked to save time in the process), all five individuals are range-resident with OUF or OUf model selected. Only B16F01 is ambiguous with AIC < 1 for several models. But M1603 which was visually not indicating a plateau is indicated here as a resident.

Depending on our knowledge related to the species and its system, we might subjectively filter individuals.

# Extract best fit model per individuals

best_fits <- lapply(results, function(f) f[[1]])

# Check best model for each individual

for (id in individuals) {

cat("\n--- Individual:", id, "---\n")

print(summary(results[[id]]))

}Reminder for the output values:

ΔAICc — Difference in AICc (corrected Akaike Information Criterion) relative to the best model. Values below 2 indicate models with comparable support, values above 7 indicate poor support. Always interpreted relatively — the model with ΔAICc = 0 is the best supported.

ΔRMSPE (m) — Difference in Root Mean Square Prediction Error in meters. Measures how well the model predicts withheld locations — a cross-validation metric. The model with ΔRMSPE = 0 has the best predictive accuracy. Note that the best AICc model and best RMSPE model don’t always agree, as AICc rewards parsimony while RMSPE rewards predictive fit.

DOF[area] — Degrees of freedom for the area estimate, roughly interpretable as the effective number of independent observations contributing to the home range estimate. Higher values mean a more reliable, better-constrained home range estimate. Very low values (e.g. < 5) suggest the home range estimate should be interpreted cautiously.

# Filter individuals which are non residents

data <- data %>%

dplyr::filter( !individual.local.identifier %in% c("B16M02"))

best_fits <- best_fits[!grepl("B16M02", names(best_fits))]Segmenting periods

Given our assumption of a stationary movement process (e.g., its statistical properties are constant in time), it may also be necessary to segment the dataset, by time or behavioral states, prior to parameter extraction.

Neglecting to address these issues 198 may result in parameter estimates drawn from an unrepresentative sample, undermining the reliability of downstream analyses.

Here, we will simply segment per day and night, acknowledged as the main drivers of fox behavior and movements.

# Add lat/lon to data (requirement for solar time)

coords_latlon <- data %>%

st_as_sf(coords = c("x_", "y_"), crs = 32630) %>%

st_transform(crs = 4326) %>%

st_coordinates() %>%

as.data.frame() %>%

rename(lon = X, lat = Y)

data <- data %>%

mutate(lon = coords_latlon$lon,

lat = coords_latlon$lat)

# Get sunrise and sunset for each unique date and location

data_filtered <- data %>%

mutate(date = as.Date(t_)) %>%

rowwise() %>%

mutate(

sun = list(getSunlightTimes(date = date,

lat = lat,

lon = lon,

keep = c("sunrise", "sunset"),

tz = "UTC")),

sunrise = sun$sunrise,

sunset = sun$sunset,

# Distinguish nocturnal and diurnal groups

diel = ifelse(t_ >= sunrise & t_ <= sunset,

"diurnal",

"nocturnal") ) %>%

ungroup() %>%

select(-sun) # remove list columnExtracting pilot parameters

tau_position <- median(sapply(best_fits, function(m) {

if(length(m) >= 13 && !is.null(m[[13]])) {

m[[13]]["tau position", "tau position"]

} else NA

}), na.rm = TRUE)

tau_velocity <- median(sapply(best_fits, function(m) {

if(length(m) >= 13 && !is.null(m[[13]])) {

m[[13]]["tau velocity", "tau velocity"]

} else NA

}), na.rm = TRUE)

sigma <- median(sapply(best_fits, function(m) {

if(length(m) >= 5 && class(m[[5]]) == "covm") {

mean(diag(m[[5]]@par))

} else NA

}), na.rm = TRUE)Specifying sampling parameters (duration and interval)

After defining study objectives and movement parameters, researchers select sampling models (duration and interval) to test through simulations. These simulations can incorporate real-world sources of bias, including:

Location error – inaccuracies in recorded positions, critically affecting distance and speed estimation.

Data loss – missing locations due to low fix-success rates or temporary signal issues.

Deployment disruption – premature recording stoppage caused by device malfunction, failures or animal mortality.

Storage limits – finite memory capacity of GPS units leading to incomplete datasets.

Location error is particularly important to address because, if it exceeds the true movement scale, it causes distance and speed estimates to inflate as sampling frequency increases.

USE THE APP

From here, we can use the movedesign app developed by Ines Silva:

- Silva, I., Fleming, C. H., Noonan, M. J., Fagan, W. F., & Calabrese, J. M. (2023). Movedesign: Shiny r app to evaluate sampling design for animal movement studies. Methods in Ecology and Evolution, 14(9), 2216–2225. https://doi.org/10.1111/2041-210X.14153

See also ctmm web application here.

# Meet app requirements

data_filtered <- data_filtered %>%

# rename(animal_ID = individual.local.identifier) %>%

select(animal_ID, t_, x_, y_,

# speed_in, speed_out, angle,

diel, gps.hdop) %>%

mutate(animal_ID = as.factor(animal_ID),

diel= as.factor(diel) ) %>%

group_by(animal_ID) %>%

slice_sample(n = 1000, replace = FALSE) %>%

ungroup()

# Save and load in the app the filtered/segmented dataset

readr::write_csv(data_filtered,

here::here("qmd", "chapter_5", "data", "fox_uk_test_filtered.csv"))remotes::install_github("ecoisilva/movedesign", dependencies = TRUE)

library(movedesign)

movedesign::run_app()ALTERNATIVE

If you want to control each step or if the app doesn’t work, find here below a simplified workflow to implement the same ultimate results: how many devices should I deploy on the ground to run a robust and accurate home range analysis?

species <- "Vulpes vulpes"

sampling_int <- 15 # minutes between fixes

fix_success <- 0.75 # 75% fix success rate

deployment_dur <- 12 # months (battery life)

loc_error <- 5 # meters (GPS accuracy)

storage_limit <- 1900000 # number of fixes storage limit

dropout_rate <- 0.15 # 15% deployment disruption

# Effective fixes per individual after accounting for fix success and dropout

fixes_per_day <- (60 / sampling_int) * 24 * fix_success

effective_days <- (deployment_dur * 30.4) * (1 - dropout_rate)

total_fixes <- fixes_per_day * effective_daysAssociated to the existing data and regarding the parameters specified, there is 2.2326^{4} effective fixes per individual after accounting for success of fixes collection and failure in devices. Providing 2.232576^{4} observations out of 1.9^{6}.

Simulating sampling design

# Build population mean model from pilot parameters

mean_model <- ctmm(

tau = c(tau_position, tau_velocity),

sigma = sigma,

isotropic = TRUE)# Time sequence based on design

dt <- sampling_int * 60 # seconds

t_seq <- seq(0, round(total_fixes) * dt,

by = dt)

n_range <- c(2, 4, 6, 10, 15, 20)

n_sims <- 5

target_cv <- 0.25 # Private choice to use most of the computer cores so it runs multiple indiv at once

library(parallel)

n_cores <- detectCores() - 2

# Simulation

results_power <- data.frame()

for(n in n_range){

cat("Simulating n =", n, "individuals...\n")

cv_list <- c()

for(s in 1:n_sims){

hr_estimates <- c()

for(ind in 1:n){

tryCatch({

# Simulate track from mean model

sim_track <- simulate(mean_model,

t = t_seq,

error = loc_error)

# Fit model

sim_guess <- ctmm.guess(sim_track, mean_model,

interactive = FALSE)

sim_fit <- ctmm.fit(sim_track, sim_guess)

# AKDE home range estimate

sim_akde <- akde(sim_track, sim_fit)

hr_estimates <- c(hr_estimates,

summary(sim_akde)$CI[2] / 1e6)

}, error = function(e) {

cat("Simulation failed, skipping...\n")

})

}

if(length(hr_estimates) >= 2){

cv_list <- c(cv_list,

sd(hr_estimates) / mean(hr_estimates))

}

}

results_power <- rbind(results_power, data.frame(

n_individuals = n,

mean_cv = mean(cv_list),

sd_cv = sd(cv_list)

))

}

for(i in 1:3) {

beepr::beep(sound = 2)

Sys.sleep(0.5)

}

# Save entire R environment

save.image(here::here("qmd", "chapter_5", "data", paste0(Sys.Date(),"_power_simulation", "_nsim_", n_sims,"_ranging_", paste(n_range, collapse = "_"), ".RData")))

n_individuals mean_cv sd_cv

1 2 0.1996281 0.12628533

2 4 0.3936213 0.20640016

3 6 0.4451786 0.22541798

4 10 0.4137192 0.05786964

5 15 0.4698869 0.07766856

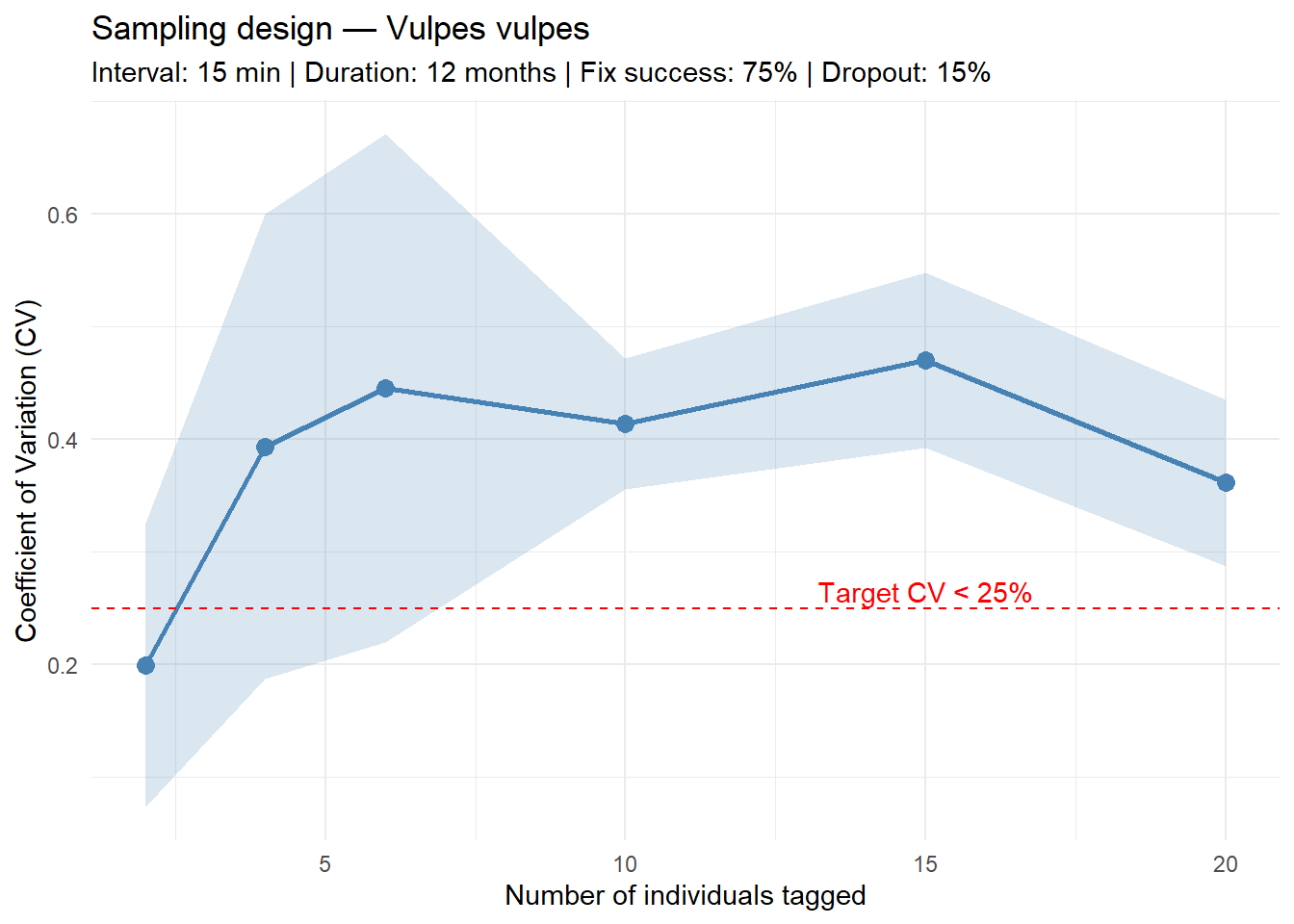

6 20 0.3612467 0.07377829Based on the power analysis using pilot movement parameters extracted from 5 Vulpes vulpes individuals, and assuming a sampling interval of 15 minutes, a deployment duration of 12 months, a fix success rate of 75%, and a 15% deployment disruption rate, the following recommendations apply:

Minimum number of individuals: We could not determine the minimum number of individuals required to achieve the analysis with a coefficient of variation ≤ 25% in home range estimate

Sampling interval: one fix every 15 minutes, yielding 72 fixes per individual per day after accounting for 75% fix success rate

Sampling period: 12 months, (310 effective days after accounting for 15% deployment disruption)

Expected fixes per tag: 2.2326^{4} locations over the deployment period

Storage check: 2.2326^{4} fixes per tag, well within the 1,900,000 fix storage limit

These recommendations are derived from 5 simulations per sample size scenario, using population-level movement parameters (τposition = 764.1 h, τvelocity = 0.07 h) estimated from the pilot dataset.